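1: What is cloud computing?

Cloud computing is a pay-as-you-go model. Underneath, it is built on virtualization technology.

2: Cloud computing service types

2.1 IaaS, Infrastructure as a Service: virtual machines, ECS, OpenStack

2.2 PaaS, Platform as a Service: PHP and Java runtimes, Docker containers + k8s

2.3 SaaS, Software as a Service: corporate email, CDN, RDS databases (development + operations)

3: Why use cloud computing

Small companies: 10 physical servers cost 200,000+, IDC hosting another 50,000, and 100M of bandwidth another 100,000. Renting 10 cloud hosts instead means a small up-front investment, flexible scaling, and low risk.

Large companies: idle server capacity is split into virtual machines and rented out (oversold).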

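Example: a 64G server holds 64 VMs of 1G each; oversold, it is sold as 320 VMs of 1G. 64 of them run the company's own workloads and the remaining 256 are rented out.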
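The overselling arithmetic can be checked with a few lines of shell. This is only a sketch: the figures are the ones from the example, and the variable names are made up for illustration.

```shell
# Oversell arithmetic for one host (figures from the example, names illustrative).
physical_gb=64      # physical memory of the server, in GB
vm_gb=1             # memory promised to each VM, in GB
sold_vms=320        # VMs actually sold on this host
own_vms=64          # VMs kept for the company's own workloads

honest_vms=$(( physical_gb / vm_gb ))        # VMs that fit without overselling
oversell_ratio=$(( sold_vms / honest_vms ))  # how many times the memory is sold
rented_vms=$(( sold_vms - own_vms ))         # VMs left to rent out

echo "VMs without overselling: $honest_vms"
echo "oversell ratio: ${oversell_ratio}x"
echo "VMs left to rent out: $rented_vms"
```

Overselling works only because tenants rarely use all the memory they rent at the same time.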

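State-owned enterprises, banks

Public cloud: anyone can rent it

Private cloud: used only inside the company

Hybrid cloud: your own private cloud plus rented public cloud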

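4: KVM virtualization, the foundation of cloud computing

Host: 4G+ of memory and a clean CentOS 7 system

4.1: What is virtualization?

Virtualization is the technique of emulating computer hardware so that several different operating systems can run at the same time on one machine.

4.2: Differences between virtualization software

Linux virtualization software:

qemu: pure software emulation, full virtualization. Very slow, but very compatible.

xen (paravirtualization): very good performance, but it needs a specially modified kernel, so compatibility is poor. redhat 5.5: xen, kvm

KVM (Linux): full virtualization with hardware (CPU) support. It is built into the kernel and needs no special kernel. centos6: kvm

Good performance, good compatibility

VMware Workstation: graphical interface

VirtualBox (Oracle): graphical interface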

4.3 安装kvm虚拟化管理工具

| 主机名 | ip地址 | 内存 | 虚拟机 |

|---|---|---|---|

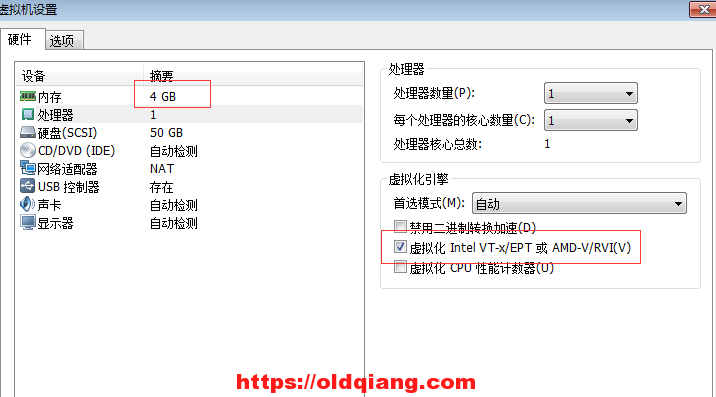

| kvm01 | 10.0.0.11 | 4G(后期调整到2G) | cpu开启vt虚拟化 |

| kvm02 | 10.0.0.12 | 2G | cpu开启vt虚拟化 |

KVM:Kernel-based Virtual Machine

yum install libvirt virt-install qemu-kvm -yKVM:Kernel-based Virtual Machine

libvirt 作用:虚拟机的管理软件 libvirt: kvm,xen,qemu,lxc….

virt virt-install virt-clone 作用:虚拟机的安装工具和克隆工具 qemu-kvm qemu-img (qcow2,raw)作用:管理虚拟机的虚拟磁盘

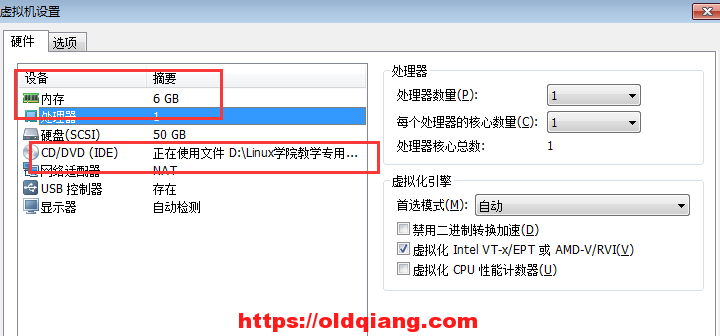

Environment requirements:

CentOS 7.4 or 7.6

A VMware VM acts as the host; the KVM VMs run inside it

4G of memory, CPU virtualization enabled

IP: 10.0.0.11

echo '192.168.12.201 mirrors.aliyun.com' >>/etc/hosts

curl -o /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

Install the packages

yum install libvirt virt-install qemu-kvm -y

4.4: Install a KVM virtual machine

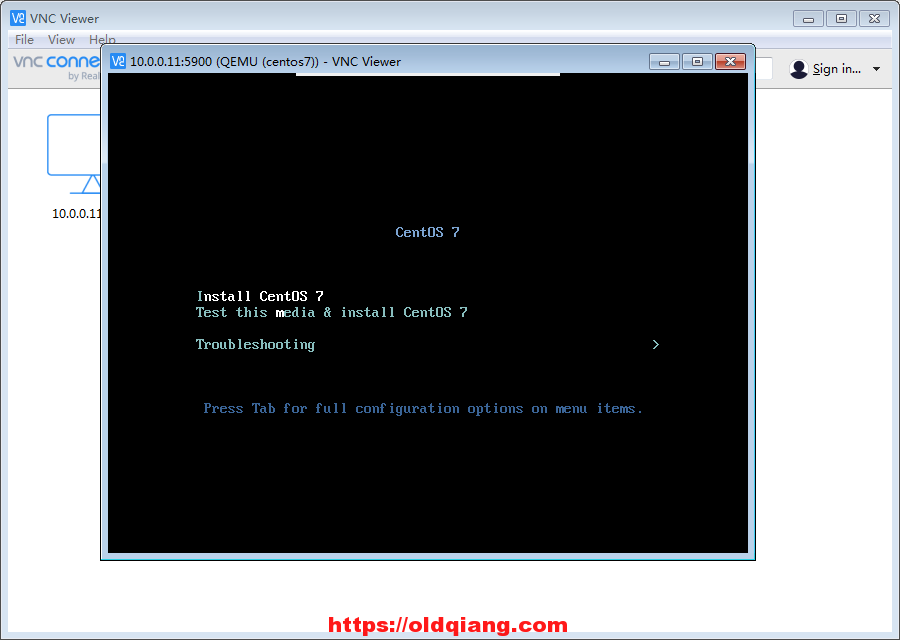

VNC-Viewer-6.19.325

Host

VNC: a remote desktop management tool, similar to Sunlogin (向日葵) and Microsoft Remote Desktop

systemctl start libvirtd.service

systemctl status libvirtd.service

10.0.0.11 is the host

Give the VM at least 1024M of memory; with less, installing the OS is very slow.

------------------------

Upload an ISO image and install from it. Put the image under /opt: files under /root are not readable, because KVM runs the VM as an unprivileged user.

virt-install --virt-type kvm --os-type=linux --os-variant rhel7 --name centos7 --memory 1024 --vcpus 1 --disk /opt/centos2.raw,format=raw,size=10 --cdrom /opt/CentOS-7-x86_64-DVD-1708.iso --network network=default --graphics vnc,listen=0.0.0.0 --noautoconsole

Or, with a qcow2 disk:

virt-install --virt-type kvm --os-type=linux --os-variant rhel7 --name centos7 --memory 1024 --vcpus 1 --disk /opt/centos2.qcow2,format=qcow2,size=10 --cdrom /opt/CentOS-7-x86_64-DVD-1708.iso --network network=default --graphics vnc,listen=0.0.0.0 --noautoconsole

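Parameter reference

VNC address: 10.0.0.11:5900

--virt-type kvm: virtualization type (kvm or qemu)

--os-type=linux: operating system type

--os-variant rhel7: operating system version

--name centos7: name of the VM

--memory 1024: VM memory, in MiB

--vcpus 1: number of virtual CPU cores

--disk /opt/centos2.raw,format=raw,size=10: disk path, format, and size (10G)

--cdrom /opt/CentOS-7-x86_64-DVD-1708.iso: path of the installation image

--network network=default: use the default NAT network

--graphics vnc,listen=0.0.0.0: VNC console, listening on all addresses

--noautoconsole: do not attach to the guest console automatically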
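To see how the parameters fit together, the sketch below assembles the same command from a few inputs and only prints it. `build_virt_install` is a hypothetical helper, not part of virt-install; the fixed 10G disk size and `rhel7` variant are copied from the commands above.

```shell
# Print (do not run) a virt-install command for a new guest.
# build_virt_install is a hypothetical helper written for these notes.
build_virt_install() {
    name=$1; disk=$2; iso=$3; mem_mb=${4:-1024}; vcpus=${5:-1}
    # Pick the disk format from the file extension.
    case "$disk" in
        *.qcow2) fmt=qcow2 ;;
        *)       fmt=raw ;;
    esac
    printf '%s ' virt-install --virt-type kvm --os-type=linux --os-variant rhel7 \
        --name "$name" --memory "$mem_mb" --vcpus "$vcpus" \
        --disk "$disk,format=$fmt,size=10" \
        --cdrom "$iso" --network network=default \
        --graphics vnc,listen=0.0.0.0 --noautoconsole
    printf '\n'
}

build_virt_install centos7 /opt/centos2.raw /opt/CentOS-7-x86_64-DVD-1708.iso
```

Review the printed line before pasting it into a root shell on the host; nothing is executed by the helper itself.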

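Recommendation: install the system with two languages (English and Chinese); Jenkins works better with Chinese available.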

Cloud hosts have no swap partition.

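4.5: Day-to-day management and configuration of KVM VMs with virsh

List VMs: list (--all)

[root@kvm01 /opt] # virsh list --all

Power on: start

[root@kvm01 /opt] # virsh start centos7

Shut down: shutdown (the VM must have an OS installed)

[root@kvm01 /opt] # virsh shutdown centos7

CentOS 6 guests cannot be shut down this way because the acpid package is missing

Force power-off, like pulling the plug: destroy

[root@kvm01 /opt] # virsh destroy centos7

Reboot: reboot (the VM must have an OS installed)

[root@kvm01 /opt] # virsh reboot centos7

Export the configuration: dumpxml. Example: virsh dumpxml centos7 >centos7-off.xml

[root@kvm01 /opt] # virsh dumpxml centos7 > vm_centos7.xml

Delete: undefine. Recommended: destroy first, then undefine. By default only the configuration file is deleted; the disk file remains.

[root@kvm01 /opt] # virsh destroy centos7

[root@kvm01 /opt] # virsh undefine centos7

Import a configuration: define

[root@kvm01 /opt] # virsh define /opt/vm_centos7.xml

ll /etc/libvirt/qemu/autostart/ # libvirt's own configuration directory

[root@kvm01 /opt] # virsh start centos7

Edit the configuration: edit (with built-in syntax checking)

[root@kvm01 /opt] # virsh edit centos7

Rename: domrename (not supported by older versions)

Suspend a VM: suspend

[root@kvm01 /opt] # virsh suspend centos7

Resume a VM: resume

[root@kvm01 /opt] # virsh resume centos7

Query the VNC port: vncdisplay

[root@kvm01 /opt] # virsh vncdisplay centos7

:0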
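`virsh vncdisplay` prints a display number rather than a port. By VNC convention display `:N` listens on TCP port 5900 + N, which is why `:0` matches the 10.0.0.11:5900 address used earlier. A small sketch of the conversion (`vnc_port` is a hypothetical helper name):

```shell
# Convert a VNC display such as ":0" or "10.0.0.11:1" to its TCP port.
vnc_port() {
    display=${1#*:}            # keep only what follows the colon
    echo $(( 5900 + display ))
}

vnc_port ":0"
```

On the host this could be combined as `vnc_port "$(virsh vncdisplay centos7)"`.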

ps -ef also shows the port, in the arguments of the qemu-kvm process

-----------

Starting KVM VMs at boot

The KVM VMs run the business applications

Start at boot: autostart. Prerequisite: systemctl enable libvirtd

[root@kvm01 /opt] # systemctl enable libvirtd

[root@kvm01 /opt] # virsh autostart centos7

Domain centos7 marked as autostarted

-- Test

[root@kvm01 /opt] # systemctl stop libvirtd

[root@kvm01 /opt] # systemctl start libvirtd

[root@kvm01 /opt] # virsh list --all

Id Name State

----------------------------------------------------

1 centos7 running

Disable start at boot: autostart --disable

[root@kvm01 /opt] # virsh autostart --disable centos7

See which VMs start automatically

[root@kvm01 /opt] # ll /etc/libvirt/qemu/autostart/

lrwxrwxrwx 1 root root 29 May 21 17:46 centos7.xml -> /etc/libvirt/qemu/centos7.xml

[root@kvm01 /opt] # virsh list --autostart

Renaming a VM, and raising its maximum and current memory

Shut down

[root@kvm01 /opt] # virsh shutdown centos7

Rename

[root@kvm01 /opt] # virsh domrename centos7 centos3

virsh edit centos7

The configuration file and the disk file must also be renamed with mv

[root@kvm01 /opt] # virsh dumpxml centos7 | grep -n 'raw'

43: <driver name='qemu' type='raw'/>

44: <source file='/opt/centos7.raw'/>

A KVM VM consists mainly of a disk file and a configuration file, which is what upgrades and migration rely on.

Console login

[root@kvm01 /opt] # ssh 192.168.122.85

[root@localhost ~]# grubby --update-kernel=ALL --args="console=ttyS0,115200n8"

[root@localhost ~]# reboot

For a CentOS 7 KVM guest:

grubby --update-kernel=ALL --args="console=ttyS0,115200n8"

reboot

Log in -----

[root@kvm01 /opt] # virsh console centos7

Connected to domain centos7

Escape character is ^]

CentOS Linux 7 (Core)

Kernel 3.10.0-693.el7.x86_64 on an x86_64

localhost login: root

Password:

Last login: Thu May 21 17:57:56 from 192.168.122.1

[root@localhost ~]#

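Ctrl + ] returns to the host

Exercise 1: set up console (command-line) login for a CentOS 6 KVM guest. Install a CentOS 6 KVM guest, passing the kernel parameter selinux=0 during installation.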

4.6: KVM virtual disk management and snapshot management

raw: raw format. Takes more space, does not support snapshots, awkward to transfer, better read/write performance. 50G total with 5G used: 50G to transfer.

qcow2: qcow (copy on write). Takes less space, supports snapshots, slightly slower than raw, easy to transfer. 50G total with 5G used: 5G to transfer.

===

virt-install --virt-type kvm --os-type=linux --os-variant rhel7 --name centos7 --memory 1024 --vcpus 1 --disk /opt/centos2.raw,format=raw,size=10 --cdrom /opt/CentOS-7-x86_64-DVD-1708.iso --network network=default --graphics vnc,listen=0.0.0.0 --noautoconsole

virt-install --virt-type kvm --os-type=linux --os-variant rhel7 --name centos7 --memory 1024 --vcpus 1 --disk /data/oldboy.qcow2,format=qcow2,size=10 --cdrom /data/CentOS-7.2-x86_64-DVD-1511.iso --network network=default --graphics vnc,listen=0.0.0.0 --noautoconsole

4.6.1 Common disk tool commands

qemu-img info, create, resize, convert

View virtual disk information

[root@kvm01 /opt] # qemu-img info centos7.raw

image: centos7.raw

file format: raw

virtual size: 10G (10737418240 bytes)

disk size: 1.1G

Create a qcow2 virtual disk:

qemu-img create -f qcow2 test.qcow2 2G

Resize a disk

qemu-img resize test.qcow2 +20G

Note: shut the VM down before converting its disk

raw to qcow2: qemu-img convert -f raw -O qcow2 oldboy.raw oldboy.qcow2

-c compresses the output. Once the disk is qcow2, snapshots become possible.

Shut down

[root@kvm01 /opt] # virsh shutdown centos7

[root@kvm01 /opt] # virsh edit centos7

[root@kvm01 /opt] # virsh dumpxml centos7 |grep qcow2

[root@kvm01 /opt] # virsh start centos7

[root@kvm01 /opt] # virsh console centos7

virsh edit web01:

<disk type='file' device='disk'>

<driver name='qemu' type='qcow2'/>

<source file='/data/centos2.qcow2'/>

<target dev='vda' bus='virtio'/>

<address type='pci' domain='0x0000' bus='0x00' slot='0x06' function='0x0'/>

</disk>

virsh destroy web01

virsh start web01

4.6.2 Snapshot management

Create a snapshot: virsh snapshot-create-as centos7 --name install_ok

[root@kvm01 /opt] # virsh snapshot-create-as centos7 --name 2ok_

List snapshots: virsh snapshot-list centos7

[root@kvm01 /opt] # virsh snapshot-list centos7

Name Creation Time State

------------------------------------------------------------

2ok_ 2020-05-22 10:34:24 +0800 running

youhua_ok 2020-05-22 10:24:55 +0800 running

Revert to a snapshot: virsh snapshot-revert centos7 --snapshotname 1516574134

[root@kvm01 /opt] # virsh snapshot-revert centos7 --snapshotname 2ok_

Delete a snapshot: virsh snapshot-delete centos7 --snapshotname 1516636570

raw does not support snapshots. qcow2 does, and the snapshots are stored inside the qcow2 disk file.

4.7: Cloning KVM VMs

4.7.1: Full clone

The disk file is copied, so the clone is independent of the original.

Automatic:

virt-clone --auto-clone -o web01 -n web02 (full clone)

[root@kvm01 /opt] # virt-clone --auto-clone -o centos7 -n web01

Manual:

qemu-img convert -f qcow2 -O qcow2 -c web01.qcow2 web02.qcow2

virsh dumpxml web01 >web02.xml

vim web02.xml

# change the VM name

# delete the VM uuid

# delete the mac address

# change the disk path

[root@kvm01 /opt] # virsh define web02.xml

[root@kvm01 /opt] # virsh start web02

-c: compress

In short: create the disk file first, then import the configuration file.

4.7.2: Linked clone

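Three steps: a) create the VM disk file, b) generate the VM configuration file, c) import the VM and start it to test.

a: Create the VM disk file, backed by the original disk (general form: qemu-img create -f qcow2 -b web03.qcow2 web04.qcow2)

qemu-img create -f qcow2 -b web01.qcow2 web03.qcow2

qemu-img info web03.qcow2

b: Generate the VM configuration file

virsh dumpxml web01 >web03.xml

vim web03.xml

# change the VM name

<name>web03</name>

# delete the VM uuid

<uuid>8e505e25-5175-46ab-a9f6-feaa096daaa4</uuid>

# delete the mac address

<mac address='52:54:00:4e:5b:89'/>

# change the disk path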

<source file='/opt/web03.qcow2'/>
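The four manual edits can also be made non-interactively. The sketch below applies them with sed to a small made-up XML fragment instead of real `virsh dumpxml` output, so it can be tried on any machine; the fragment and the variable names are illustrative only.

```shell
# Trimmed, illustrative stand-in for `virsh dumpxml web01` output.
sample_xml() {
cat <<'EOF'
<domain type='kvm'>
  <name>web01</name>
  <uuid>8e505e25-5175-46ab-a9f6-feaa096daaa4</uuid>
  <devices>
    <disk type='file' device='disk'>
      <driver name='qemu' type='qcow2'/>
      <source file='/opt/web01.qcow2'/>
    </disk>
    <interface type='network'>
      <mac address='52:54:00:4e:5b:89'/>
      <source network='default'/>
    </interface>
  </devices>
</domain>
EOF
}

new_vm=web03
new_disk=/opt/${new_vm}.qcow2

# Rename the VM, drop uuid and mac so libvirt generates new ones,
# and point the disk at the clone's file.
clone_xml=$(sample_xml | sed -E \
    -e "s#<name>.*</name>#<name>${new_vm}</name>#" \
    -e '/<uuid>/d' \
    -e '/<mac address/d' \
    -e "s#<source file='[^']*'/>#<source file='${new_disk}'/>#")

echo "$clone_xml"
```

Only the `<source file=...>` line is rewritten; the `<source network=...>` line of the interface is left alone because the pattern requires the `file=` attribute.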

virsh define web03.xml

virsh start web03

Fully automatic linked-clone script:

[root@kvm01 scripts]# cat link_clone.sh

#!/bin/bash

# Usage: link_clone.sh OLD_VM NEW_VM

old_vm=$1

new_vm=$2

# a: create the VM disk file, backed by the original VM's disk

old_disk=$(virsh dumpxml "$old_vm" | grep "<source file" | awk -F"'" '{print $2}')

disk_tmp=$(dirname "$old_disk")

qemu-img create -f qcow2 -b "$old_disk" "${disk_tmp}/${new_vm}.qcow2"

# b: generate the VM configuration file

virsh dumpxml "$old_vm" >"/tmp/${new_vm}.xml"

# change the VM name

sed -ri "s#(<name>)(.*)(</name>)#\1${new_vm}\3#g" "/tmp/${new_vm}.xml"

# delete the VM uuid

sed -i '/<uuid>/d' "/tmp/${new_vm}.xml"

# delete the mac address

sed -i '/<mac address/d' "/tmp/${new_vm}.xml"

# change the disk path

sed -ri "s#(<source file=')(.*)('/>)#\1${disk_tmp}/${new_vm}.qcow2\3#g" "/tmp/${new_vm}.xml"

# c: import the VM and start it to test

virsh define "/tmp/${new_vm}.xml"

virsh start "${new_vm}"

4.8: Bridged networking for KVM VMs

The default VM network is NAT mode, on the 192.168.122.0/24 subnet

Bridging starts from the template VM

4.8.1: Create a bridge interface

[root@kvm01 ~] # cat /etc/sysconfig/network-scripts/ifcfg-eth0

DEVICE="eth0"

ONBOOT="yes"

BOOTPROTO="none"

IPADDR="10.0.1.11"

NETMASK="255.255.255.0"

GATEWAY="10.0.1.254"

Keep only these lines in the interface file

Command to create the bridge interface

[root@kvm01 ~] # virsh iface-bridge eth0 br0

Command to remove the bridge interface

[root@kvm01 ~]# virsh iface-unbridge br0

4.8.2 Using bridged mode for a new VM

Default NAT mode

virt-install --virt-type kvm --os-type=linux --os-variant rhel7 --name web04 --memory 1024 --vcpus 1 --disk /opt/web04.qcow2 --boot hd --network network=default --graphics vnc,listen=0.0.0.0 --noautoconsole

Bridged mode (only --network changes):

virt-install --virt-type kvm --os-type=linux --os-variant rhel7 --name web04 --memory 1024 --vcpus 1 --disk /data/web04.qcow2 --boot hd --network bridge=br0 --graphics vnc,listen=0.0.0.0 --noautoconsole

Problem 1:

If the VM cannot obtain an IP address:

4.8.3 Switching an existing VM to bridged mode

Edit the VM's configuration while it is shut down:

For example: virsh edit web04

[root@kvm01 ~] # virsh shutdown web04

[root@kvm01 ~] # virsh list --all

Id Name State

----------------------------------------------------

- centos7 shut off

- web01 shut off

- web02 shut off

- web03 shut off

- web04 shut off

[root@kvm01 ~] # virsh edit web04

---------------- new

<interface type='bridge'>

<mac address='52:54:00:4b:2a:b7'/>

<source bridge='br0'/>

--------- old

<mac address='52:54:00:07:9d:5c'/>

<source network='default' bridge='virbr0'/>

<target dev='vnet0'/>

----------

b: Start the VM and test its network

[root@kvm01 ~] # virsh start web04

[root@kvm01 ~] # virsh console web04

If the upstream network has no DHCP server, configure the IP address by hand: IPADDR, NETMASK, GATEWAY, DNS1=180.76.76.76

4.9: Hot-add

Hot-adding disks, NICs, memory, and CPUs

4.9.1 Hot-adding a disk to a KVM VM

[root@kvm01 /opt] # qemu-img create -f qcow2 /opt/web04_add.qcow2 1G

Inspect the disk

[root@kvm01 /opt] # qemu-img info web04_add.qcow2

image: web04_add.qcow2

file format: qcow2

virtual size: 3.0G (3221225472 bytes)

disk size: 196K

cluster_size: 65536

Format specific information:

compat: 1.1

lazy refcounts: false

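Temporary, effective immediately

[root@kvm01 /opt] # virsh attach-disk web04 /opt/web04_add.qcow2 vdb --subdriver qcow2

Arguments, in order: VM, disk file, target device, disk format

Format

root @ localhost ~ 21:23:14 $

mkfs.xfs /dev/vdb

Mount

root @ localhost /mnt 21:24:31 $

mount /dev/vdb /mnt/

Usually the temporary and the permanent form are run together

Permanent (takes effect after a restart)

virsh attach-disk web01 /data/web01-add.qcow2 vdb --subdriver qcow2 --config

Detach a disk temporarily

virsh detach-disk web01 vdb

Detach a disk permanently

virsh detach-disk web01 vdb --config

Growing a disk: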

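In the VM, unmount the directory where the disk to be grown is mounted

umount /mnt

On the host, detach the disk

[root@kvm01 /opt] # virsh detach-disk web04 vdb

On the host, resize it with qemu-img resize

[root@kvm01 /opt] # qemu-img resize /opt/web04_add.qcow2 4G

On the host, attach the disk again

[root@kvm01 /opt] # virsh attach-disk web04 /opt/web04_add.qcow2 vdb --subdriver qcow2

In the VM, mount the grown disk again

fdisk -l

mount /dev/vdb /mnt

In the VM, run xfs_growfs on the mount point so the filesystem (superblock) picks up the new size

xfs_growfs /mnt

Exercise 1: grow the root partition of a KVM VM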

4.9.2 Hot-adding a NIC to a running KVM VM

[root@kvm01 /opt] # virsh attach-interface web04 bridge br0

[root@kvm01 /opt] # virsh attach-interface web04 network default

[root@kvm01 /opt] # virsh attach-interface web04 network default --model virtio

The MAC address can be seen inside the VM with ip addr

Remove a NIC

[root@kvm01 /opt] # virsh detach-interface web04 network --mac 52:54:00:4a:27:86

Add a bridged NIC (it appears in the guest under a name such as ens10; the name varies)

[root@kvm01 opt]# virsh attach-interface web04 bridge br0

Add a NAT NIC

[root@kvm01 /opt] # virsh attach-interface web04 network default

Add a NIC with a specific driver model

[root@kvm01 /opt] # virsh attach-interface web04 network default --model virtio

Write the change to the configuration file

[root@kvm01 /opt] # virsh attach-interface web04 network default --model virtio --config

virsh attach-interface web04 --type bridge --source br0 --model virtio

virsh attach-interface web04 --type bridge --source br0 --model virtio --config

virsh detach-interface web04 --type bridge --mac 52:54:00:35:d3:71

4.9.3 Hot-adding memory to a running KVM VM

Shrink memory

[root@kvm01 /opt] # virsh setmem web04 --size 512M

Raise the memory limit

[root@kvm01 /opt] # virsh setmaxmem web04 --size 2048M

[root@kvm01 /opt] # vim vm_centos7.xml

<memory unit='KiB'>1048576</memory> (maximum)

<currentMemory unit='KiB'>1048576</currentMemory> (current)
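The XML stores memory in KiB while the virsh and virt-install examples use MiB, so 1048576 KiB is the same 1024M used elsewhere in these notes. A quick conversion sketch (both function names are made up for illustration):

```shell
# Convert between the MiB used on the command line and the KiB used in the XML.
mib_to_kib() { echo $(( $1 * 1024 )); }
kib_to_mib() { echo $(( $1 / 1024 )); }

mib_to_kib 1024      # value to expect in <memory unit='KiB'>
kib_to_mib 1048576   # value to pass as --memory
```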

-----

virt-install --virt-type kvm --os-type=linux --os-variant rhel7 --name web04 --memory 512,maxmemory=2048 --vcpus 1 --disk /data/web04.qcow2 --boot hd --network bridge=br0 --graphics vnc,listen=0.0.0.0 --noautoconsole

--------

Hot-add memory temporarily

virsh setmem web04 1024M

Increase memory permanently

virsh setmem web04 1024M --config

Adjust the VM's maximum memory

virsh setmaxmem web04 4G

4.9.4 Hot-adding CPUs to a running KVM VM

virt-install --virt-type kvm --os-type=linux --os-variant rhel7 --name web04 --memory 512,maxmemory=2048 --vcpus 1,maxvcpus=10 --disk /data/web04.qcow2 --boot hd --network bridge=br0 --graphics vnc,listen=0.0.0.0 --noautoconsole

How do you check how many CPUs a server has? Look at the lscpu output, for example:

NUMA node(s): 1

setvcpu: changes the state of individual vCPUs (hot plug and unplug)

setvcpus: sets the number of vCPUs

<vcpu placement='static' current='1'>4</vcpu>

<vcpu placement='static'>1</vcpu>

Hot-add vCPUs

[root@kvm01 /opt] # virsh setvcpus web04 4

Add vCPUs permanently

[root@kvm01 /opt] # virsh setvcpus web04 4 --config

Adjust the VM's maximum vCPU count

[root@kvm01 /opt] # virsh setvcpus web01 --maximum 4 --config

4.10: virt-manager and live migration of KVM VMs (shared network file system)

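Cold migration of a KVM VM: copy the configuration file and the disk file

Live migration of a KVM VM: move the configuration, with the disk on shared NFS storage

1): Graphical environment (for virt-manager)

yum groupinstall "GNOME Desktop" -y

yum install openssh-askpass -y

yum install tigervnc-server -y

vncpasswd

vncserver :1

vncserver -kill :1

2): Live migration of KVM VMs

1: The environment on both sides (bridge interface)

| Hostname | IP | Memory | Network | Software | Virtualization |
|---|---|---|---|---|---|
| kvm01 | 10.0.0.11 | 2G | br0 bridge interface created | kvm and nfs | enabled |
| kvm02 | 10.0.0.12 | 2G | br0 bridge interface created | kvm and nfs | enabled |
| nfs01 | 10.0.0.31 | 1G | none | nfs | none |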

2: Set up shared storage (NFS)

When migrating KVM VMs, make sure the host environments are identical!

https://oldqiang.com/archives/368.html

NFS ports

yum install nfs-utils rpcbind -y

vim /etc/exports

/data 10.0.1.0/24(rw,async,no_root_squash,no_all_squash)

mkdir /data

systemctl start rpcbind

systemctl enable rpcbind

systemctl start nfs

systemctl enable nfs

systemctl start rpcbind nfs

# on kvm01 and kvm02

[root@kvm02 /opt] # showmount -e 10.0.1.31

mount -t nfs 10.0.0.31:/data /data

yum install libvirt qemu-kvm virt-install -y

[root@kvm02 ~] # systemctl start libvirtd

[root@kvm02 ~] # systemctl status libvirtd

Install on all nodes

yum install nfs-utils -y

Bridge interface

[root@kvm02 /opt] # virsh iface-bridge eth0 br0

Cold migration

Back up

[root@kvm01 /opt] # virsh dumpxml web03 > web03.xml

Use the same directory on the target so the configuration file needs no changes

[root@kvm01 /opt] # scp web03.xml web03.qcow2 root@10.0.1.12:/opt/

[root@kvm01 /opt] # scp centos7.qcow2 root@10.0.1.12:/opt/

Import and start

[root@kvm02 /opt] # virsh define /opt/web03.xml

error: Failed to start domain web04

error: Cannot get interface MTU on 'br0': No such device

The error above means the br0 bridge interface is missing on the target host

3: Live migration

[root@kvm02 /] # mv /opt/ /srv/

[root@kvm02 /] # mv /opt/* /srv/

[root@kvm02 /] # mount -t nfs 10.0.1.31:/data /opt/

[root@kvm02 /] # mv /srv/* /opt/

[root@kvm01 /opt] # rm -rf /etc/libvirt/qemu/*.xml

[root@kvm01 /opt] # systemctl restart libvirtd

[root@kvm01 /opt] # vim /etc/hosts

10.0.1.11 kvm01

10.0.1.12 kvm02

[root@kvm02 /opt] # virsh migrate --live --verbose web04 qemu+ssh://10.0.1.11/system --unsafe

root@10.0.1.11's password:

Migration: [100 %]error: internal error: qemu unexpectedly closed the monitor

[root@kvm01 /opt] # virsh list --all

Id Name State

----------------------------------------------------

3 web04 running

[root@kvm01 /opt] # virsh dumpxml web04 > web04.xml

Migrate only once the disk data has been written to storage

# temporary migration

virsh migrate --live --verbose web04 qemu+ssh://10.0.1.11/system --unsafe

# permanent migration

virsh migrate --live --verbose web03 qemu+ssh://10.0.0.100/system --unsafe --persistent --undefinesource

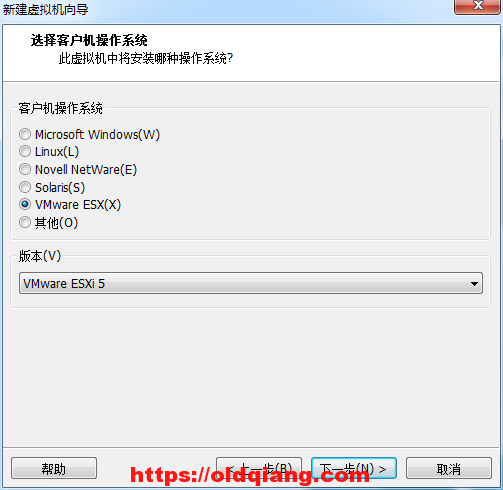

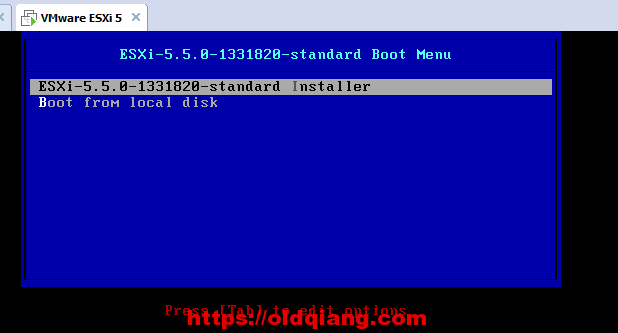

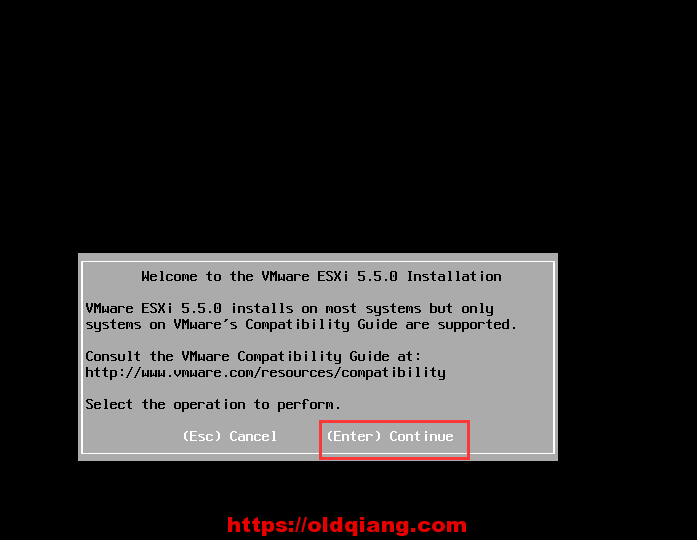

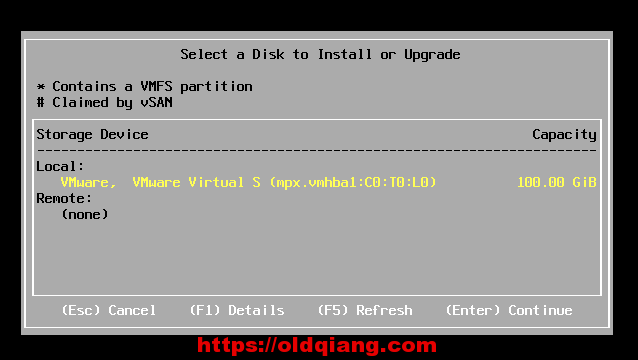

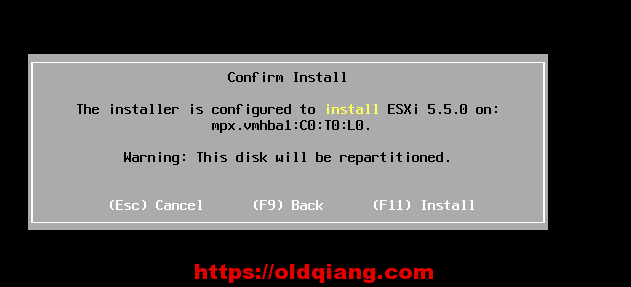

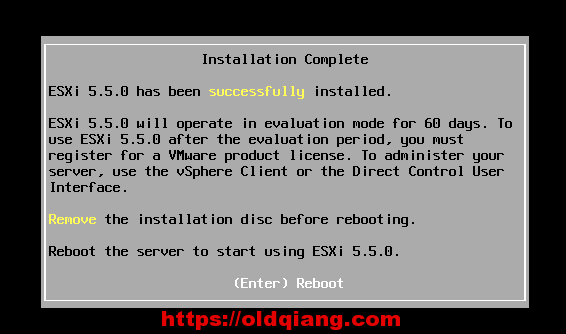

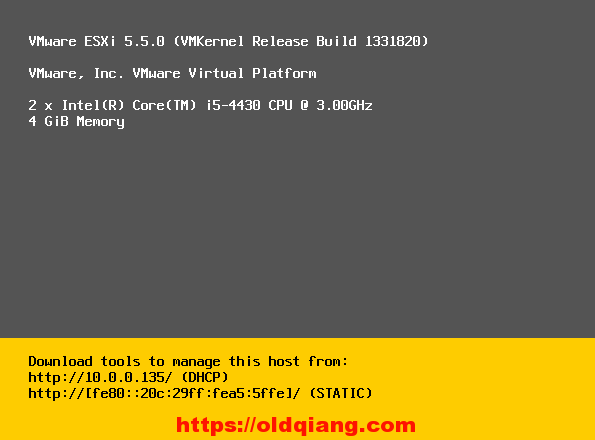

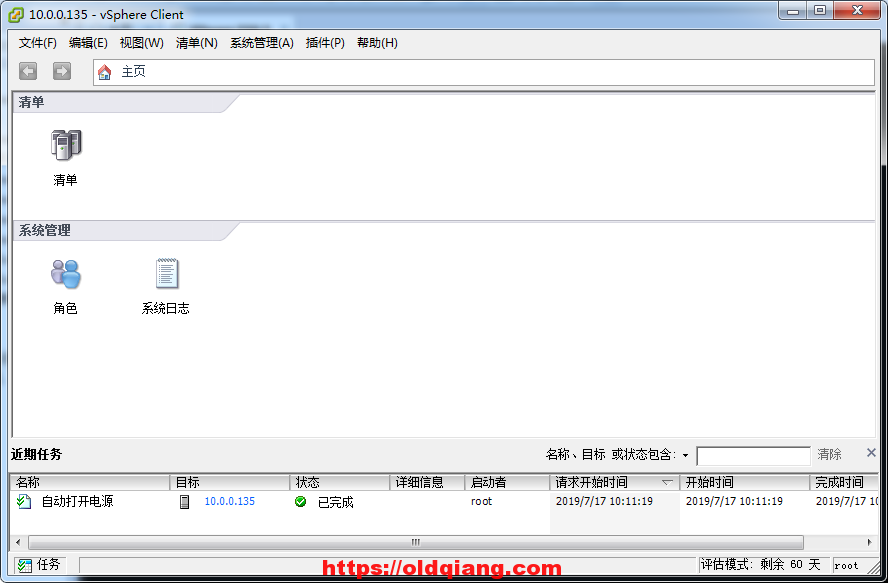

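5: The ESXi virtualization system

5.1 Install ESXi

5.1.1 Create a VM

Press Enter through the prompts until

Press F11

5.2 Start ESXi

5.3 Install the ESXi client

Just click Next all the way through

Installation complete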

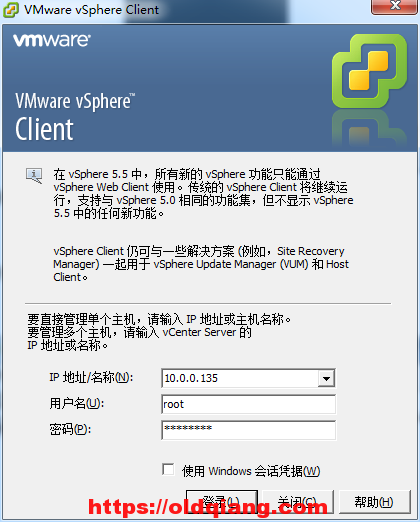

5.4 Connect to the ESXi server with the client

Screen after a successful connection

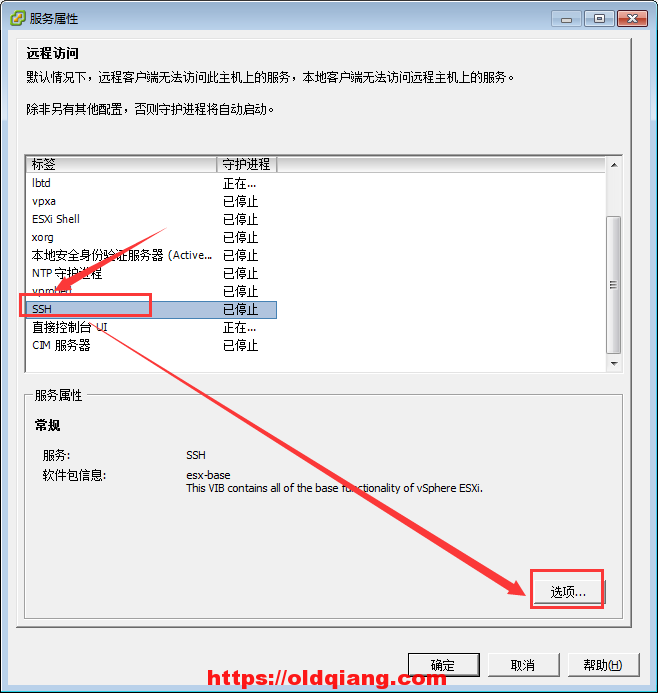

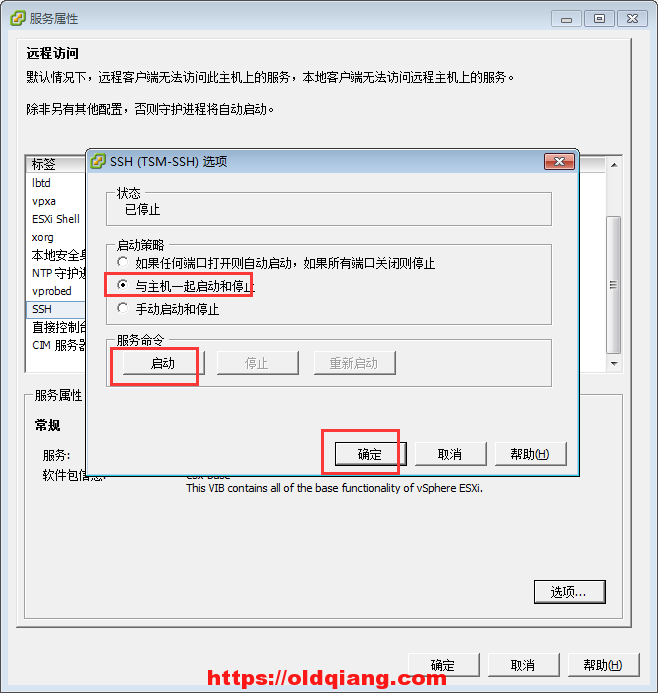

5.5 Common ESXi settings

Enable SSH

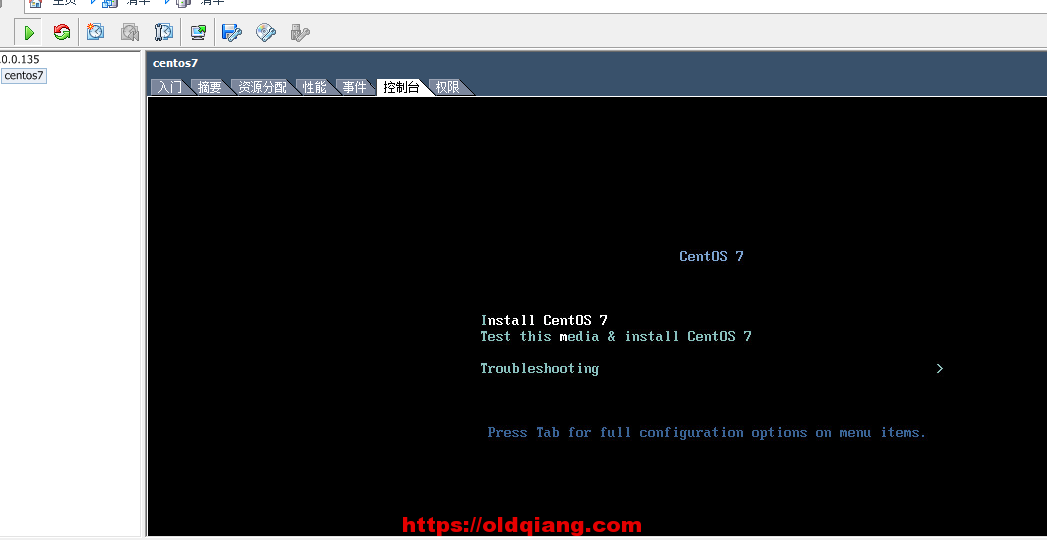

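5.6 Install an ESXi VM

5.7 Migrate a KVM VM to ESXi

On the KVM host:

qemu-img convert -f qcow2 oldimage.qcow2 -O vmdk newimage.vmdk

# may not be needed

vmkfstools -i oldimage.vmdk newimage.vmdk -d thin

5.8 Migrate an ESXi VM to KVM

https://www.cnblogs.com/clsn/p/8510670.html

Reference 2: on the KVM host, convert the OVA, import the configuration, and start the VM

a: Upload the OVA file

b: yum install virt-v2v -y

c: virt-v2v -i ova /opt/CentOS7.6.ova -o local -os /srv -of qcow2

-o local writes the output locally; -os names the directory to write to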

-----

[root@kvm02 /opt] # virt-v2v -i ova /opt/CentOS7.6.ova -o local -os /srv -of qcow2

[ 0.0] Opening the source -i ova /opt/CentOS7.6.ova

[ 191.0] Initializing the target -o local -os /srv

[ 191.0] Copying disk 1/1 to /srv/CentOS7.6-sda (qcow2)

(41.16/100%)

--------

Import the configuration file

d: virsh define /srv/CentOS7.6.xml

e: virsh start CentOS7.6

f: virsh edit CentOS7.6

<mac address='52:54:00:e9:e9:f3'/>

<source bridge='br0'/>

<graphics type='vnc' port='-1' autoport='yes' listen='0.0.0.0'>

<listen type='address' address='0.0.0.0'/>

</graphics>

g: virsh start CentOS7.6

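Export the VM as an OVA file, then convert it:

virt-v2v -i ova centos-dev-test01-v2v.ova -o local -os /opt/test -of qcow2

-o local writes the output locally; -os names the directory to write to

With 2,000 KVM hosts: how many VMs run on each host? How much capacity does each host have left? What are the IP addresses of every host and every VM?

Excel, asset management, CMDB

KVM management platform, database tools

Information to track: hosts (total capacity, remaining capacity); VMs (configuration, IP address, operating system)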
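The first of these questions, how many VMs a host runs, can be answered by parsing `virsh list --all`. A minimal sketch, run here against a made-up sample listing so it works without libvirt; `count_by_state` is a hypothetical helper name:

```shell
# Illustrative sample in the layout printed by `virsh list --all`.
sample_list() {
cat <<'EOF'
 Id    Name                           State
----------------------------------------------------
 1     centos7                        running
 -     web01                          shut off
 -     web02                          shut off
 3     web04                          running
EOF
}

# Count VMs per state. The state is everything after the second column,
# because "shut off" contains a space.
count_by_state() {
    awk 'NR > 2 && NF >= 3 {
             state = $3
             for (i = 4; i <= NF; i++) state = state " " $i
             count[state]++
         }
         END { for (s in count) printf "%s=%d\n", s, count[s] }' | sort
}

sample_list | count_by_state
```

On a real host the input would be `virsh list --all | count_by_state`; collecting that from every host over ssh is the kind of bookkeeping a CMDB or management platform automates.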

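A KVM management platform with billing: OpenStack (Ceilometer handles billing). ECS sits at the IaaS layer: automated management of KVM hosts and customized operations on cloud hosts

Server: 20 cores, 1T of memory, 96T

Without virtualization, resources are wasted and the Linux environment gets very messy; hence KVM VMs

6. Deploying OpenStack Mitaka automatically with a script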

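Deploying OpenStack: clone an OpenStack template machine:

all-in-one environment

4G of memory, virtualization enabled, CentOS 7.6 disc attached

After the VM boots, change its IP address to 10.0.0.11

Upload the script openstack-mitaka-autoinstall.sh to /root

Upload the image cirros-0.3.4-x86_64-disk.img to /root

Upload the configuration file local_settings to /root

Upload openstack_rpm.tar.gz to /root,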

[root@kvm ~] # tar xf openstack_rpm.tar.gz -C /opt/

[root@kvm ~] # mount /dev/cdrom /mnt

sh /root/openstack-mitaka-autoinstall.sh

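This takes roughly 10-30 minutes

Visit http://10.0.0.11/dashboard

Domain: default

User name: admin

Password: ADMIN_PASS

Note: on Windows, add a hosts entry (10.0.0.11 controller)

-------------------------------------

Adding a node:

Virtualization enabled, 2G of memory, disc attached

Change the IP address

hostnamectl set-hostname compute1

Log in again so the new hostname takes effect

Upload openstack_rpm.tar.gz to /root,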

tar xf openstack_rpm.tar.gz -C /opt/

mount /dev/cdrom /mnt

Upload the script openstack_compute_install.sh

sh openstack_compute_install.sh

openstack controller: the main control node; node: a KVM host

node, KVM host

node, KVM host

node, KVM host

Resolve hostnames locally through the hosts file

A disk file with an installed system serves as an image

http://mirrors.ustc.edu.cn/centos-cloud/centos/7/images/CentOS-7-x86_64-GenericCloud-1605.qcow2

Project quotas


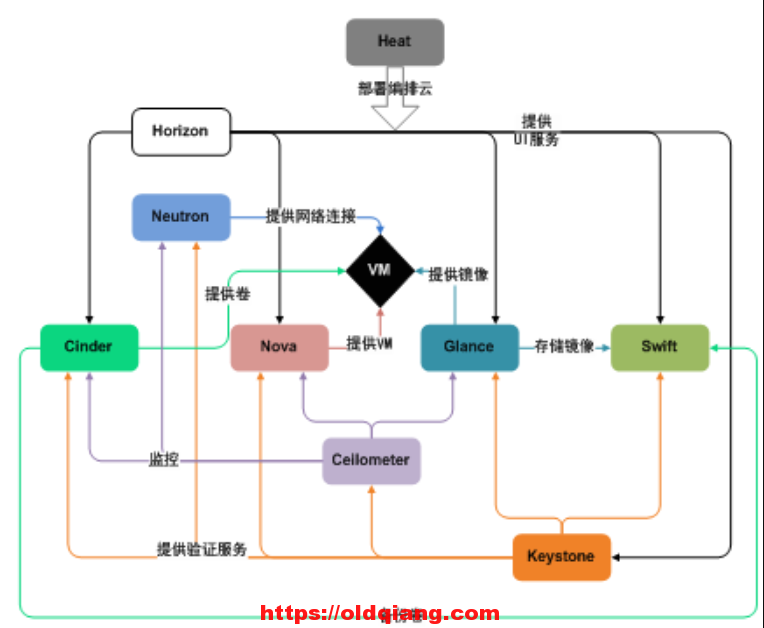

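7: Deploying an OpenStack cluster step by step

7.1 OpenStack basic architecture

7.1: Prepare the environment

| Hostname | Role | IP | Memory |
|---|---|---|---|
| controller | Controller node | 10.0.0.11 | 3G or 4G |
| compute1 | Compute node | 10.0.0.31 | 1G |

Note: the hosts must resolve each other through hosts entries

7.1.1 Time synchronization

# server side, controller node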

vim /etc/chrony.conf

allow 10.0.0.0/24

systemctl restart chronyd

# client side, compute1 node

vim /etc/chrony.conf

server 10.0.0.11 iburst

systemctl restart chronyd

Verify: run date on both nodes at the same time

7.1.2: Configure the yum repository and install the client

# all nodes

# steps:

cd /opt/

rz -E

tar xf openstack_ocata_rpm.tar.gz

cd /etc/yum.repos.d/

mv *.repo /tmp

mv /tmp/CentOS-Base.repo .

vi openstack.repo

[openstack]

name=openstack

baseurl=file:///opt/repo

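enabled=1

gpgcheck=0

# verify:

yum clean all

yum install python-openstackclient -y

7.1.3: Install the database

# controller node

yum install mariadb mariadb-server python2-PyMySQL -y

## All OpenStack components are written in Python; OpenStack needs the python2-PyMySQL module to connect to the database

# edit the mariadb configuration file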

vi /etc/my.cnf.d/openstack.cnf

[mysqld]

bind-address = 10.0.0.11

default-storage-engine = innodb

innodb_file_per_table = on

max_connections = 4096

collation-server = utf8_general_ci

character-set-server = utf8

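# start the database

systemctl start mariadb

systemctl enable mariadb

# secure the database installation

mysql_secure_installation

Press Enter

n

then y for everything else

7.1.3 Install the rabbitmq message queue

# controller node

# install the message queue

yum install rabbitmq-server -y

# start rabbitmq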

systemctl start rabbitmq-server.service

systemctl enable rabbitmq-server.service

# create a user in rabbitmq

rabbitmqctl add_user openstack 123456

# grant permissions to the new openstack user

rabbitmqctl set_permissions openstack ".*" ".*" ".*"

7.1.4 Install the memcache cache

# controller node

# install memcache

yum install memcached python-memcached -y

## python-memcached is the Python module for connecting to memcache

# configure

vim /etc/sysconfig/memcached

## change the last line

OPTIONS="-l 0.0.0.0"

# start the service

systemctl start memcached

systemctl enable memcached

7.2 Install the keystone service

# create the database and grant privileges

## log in to mysql

CREATE DATABASE keystone;

GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'localhost' IDENTIFIED BY '123456';

GRANT ALL PRIVILEGES ON keystone.* TO 'keystone'@'%' IDENTIFIED BY '123456';

# install the keystone service

yum install openstack-keystone httpd mod_wsgi -y

## httpd uses the mod_wsgi module to run the Python project

# edit the keystone configuration file

cp /etc/keystone/keystone.conf{,.bak}

grep -Ev '^$|#' /etc/keystone/keystone.conf.bak >/etc/keystone/keystone.conf
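The `grep -Ev '^$|#'` filter drops every empty line and every line that contains a `#`, which is why the resulting file holds only section headers and active settings. A small demonstration on a made-up config fragment (the sample content is illustrative, not the real keystone.conf):

```shell
# Illustrative fragment in the style of a packaged OpenStack config file.
sample_conf() {
cat <<'EOF'
[DEFAULT]
# admin_token = <None>

[database]
#connection = <None>
connection = mysql+pymysql://keystone:123456@controller/keystone
EOF
}

# Keep only lines that are non-empty and contain no '#'.
sample_conf | grep -Ev '^$|#'
```

Because the pattern matches `#` anywhere in a line, a setting whose value contains `#` would be dropped too; that does not happen with the stock files used here, but it is worth knowing before reusing the filter elsewhere.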

# the complete configuration file:

[root@controller ~]# vi /etc/keystone/keystone.conf

[DEFAULT]

[assignment]

[auth]

[cache]

[catalog]

[cors]

[cors.subdomain]

[credential]

[database]

connection = mysql+pymysql://keystone:123456@controller/keystone

[domain_config]

[endpoint_filter]

[endpoint_policy]

[eventlet_server]

[federation]

[fernet_tokens]

[healthcheck]

[identity]

[identity_mapping]

[kvs]

[ldap]

[matchmaker_redis]

[memcache]

[oauth1]

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[paste_deploy]

[policy]

[profiler]

[resource]

[revoke]

[role]

[saml]

[security_compliance]

[shadow_users]

[signing]

[token]

provider = fernet

[tokenless_auth]

[trust]

# verify the md5 checksum

md5sum /etc/keystone/keystone.conf

85d8b59cce0e4bd307be15ffa4c0cbd6 /etc/keystone/keystone.conf

# sync the database

su -s /bin/sh -c "keystone-manage db_sync" keystone

## runs a single command as the unprivileged keystone user, with the given shell

## check that the tables were created

mysql keystone -e 'show tables;'|wc -l

# initialize the token credentials

keystone-manage fernet_setup --keystone-user keystone --keystone-group keystone

keystone-manage credential_setup --keystone-user keystone --keystone-group keystone

# bootstrap the keystone identity service

keystone-manage bootstrap --bootstrap-password 123456 \

--bootstrap-admin-url http://controller:35357/v3/ \

--bootstrap-internal-url http://controller:5000/v3/ \

--bootstrap-public-url http://controller:5000/v3/ \

--bootstrap-region-id RegionOne

# configure httpd

# small optimization

echo "ServerName controller" >>/etc/httpd/conf/httpd.conf

# add the keystone site configuration to httpd

ln -s /usr/share/keystone/wsgi-keystone.conf /etc/httpd/conf.d/

# starting httpd is what starts keystone

systemctl start httpd

systemctl enable httpd

# declare the environment variables

export OS_USERNAME=admin

export OS_PASSWORD=123456

export OS_PROJECT_NAME=admin

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_DOMAIN_NAME=Default

export OS_AUTH_URL=http://controller:35357/v3

export OS_IDENTITY_API_VERSION=3

# verify that keystone works

openstack user list
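If `openstack user list` fails with an authentication error, a common cause is a missing variable in the current shell. The sketch below checks the seven variables exported above; `check_os_env` is a hypothetical helper, not an OpenStack command.

```shell
# Report which of the OS_* variables used in these notes are unset or empty.
check_os_env() {
    missing=0
    for var in OS_USERNAME OS_PASSWORD OS_PROJECT_NAME OS_USER_DOMAIN_NAME \
               OS_PROJECT_DOMAIN_NAME OS_AUTH_URL OS_IDENTITY_API_VERSION; do
        eval "value=\${$var:-}"        # indirect lookup of the variable named in $var
        if [ -z "$value" ]; then
            echo "missing: $var" >&2
            missing=1
        fi
    done
    return $missing
}

if check_os_env; then
    echo "OpenStack environment looks complete"
else
    echo "some OpenStack variables are not set"
fi
```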

# create the service project

openstack project create --domain default \

--description "Service Project" service

# edit /root/.bashrc

vi /root/.bashrc

export OS_USERNAME=admin

export OS_PASSWORD=123456

export OS_PROJECT_NAME=admin

export OS_USER_DOMAIN_NAME=Default

export OS_PROJECT_DOMAIN_NAME=Default

export OS_AUTH_URL=http://controller:35357/v3

export OS_IDENTITY_API_VERSION=3

7.3 Install the glance service

Purpose: manages image templates

1: Create the database and grant privileges

CREATE DATABASE glance;

GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'localhost' \

IDENTIFIED BY '123456';

GRANT ALL PRIVILEGES ON glance.* TO 'glance'@'%' \

IDENTIFIED BY '123456';
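Every service in these notes repeats the same three statements: one CREATE DATABASE and two GRANTs, for localhost and for any host. A sketch that generates them (`gen_grants` is a hypothetical helper; it only prints SQL and does not connect to the database):

```shell
# Print the CREATE DATABASE and GRANT statements for one service database.
# Arguments: database name, user name, password.
gen_grants() {
    db=$1; user=$2; pass=$3
    echo "CREATE DATABASE ${db};"
    for host in localhost %; do
        echo "GRANT ALL PRIVILEGES ON ${db}.* TO '${user}'@'${host}' IDENTIFIED BY '${pass}';"
    done
}

gen_grants glance glance 123456
```

The output could be reviewed and then piped into the client, for example `gen_grants glance glance 123456 | mysql`. The password 123456 is the lab value used throughout these notes and is not suitable outside a lab.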

2: Create the user in keystone and assign the role

openstack user create --domain default --password 123456 glance

openstack role add --project service --user glance admin

3: Create the service in keystone and register the API endpoints

openstack service create --name glance \

--description "OpenStack Image" image

openstack endpoint create --region RegionOne \

image public http://controller:9292

openstack endpoint create --region RegionOne \

image internal http://controller:9292

openstack endpoint create --region RegionOne \

image admin http://controller:9292
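The three endpoint commands differ only in the interface name (public, internal, admin). A sketch that prints them for any service (`gen_endpoints` is a hypothetical helper; it prints the commands rather than running them):

```shell
# Print the three `openstack endpoint create` commands for one service.
# Arguments: service type, URL, optional region (defaults to RegionOne).
gen_endpoints() {
    service_type=$1; url=$2; region=${3:-RegionOne}
    for iface in public internal admin; do
        echo "openstack endpoint create --region ${region} ${service_type} ${iface} ${url}"
    done
}

gen_endpoints image http://controller:9292
```

The same helper covers the later services, for example `gen_endpoints compute http://controller:8774/v2.1`.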

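4: Install the service packages

yum install openstack-glance -y

5: Edit the configuration files (database connection, keystone authorization)

## glance-api: upload, download, delete

## glance-registry: changes image properties (x86, root partition size)

# edit the glance-api configuration file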

cp /etc/glance/glance-api.conf{,.bak}

grep -Ev '^$|#' /etc/glance/glance-api.conf.bak >/etc/glance/glance-api.conf

vim /etc/glance/glance-api.conf

[DEFAULT]

[cors]

[cors.subdomain]

[database]

connection = mysql+pymysql://glance:123456@controller/glance

[glance_store]

stores = file,http

default_store = file

filesystem_store_datadir = /var/lib/glance/images/

[image_format]

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = 123456

[matchmaker_redis]

[oslo_concurrency]

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[paste_deploy]

flavor = keystone

[profiler]

[store_type_location_strategy]

[task]

[taskflow_executor]

## verify

md5sum /etc/glance/glance-api.conf

a42551f0c7e91e80e0702ff3cd3fc955 /etc/glance/glance-api.conf

## edit the glance-registry.conf configuration file

cp /etc/glance/glance-registry.conf{,.bak}

grep -Ev '^$|#' /etc/glance/glance-registry.conf.bak >/etc/glance/glance-registry.conf

vim /etc/glance/glance-registry.conf

[DEFAULT]

[database]

connection = mysql+pymysql://glance:123456@controller/glance

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = glance

password = 123456

[matchmaker_redis]

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_policy]

[paste_deploy]

flavor = keystone

[profiler]

## verify

md5sum /etc/glance/glance-registry.conf

5b28716e936cc7a0ab2a841c914cd080 /etc/glance/glance-registry.conf

6: Sync the database (create tables)

su -s /bin/sh -c "glance-manage db_sync" glance

mysql glance -e 'show tables;'|wc -l

7: Start the services

systemctl enable openstack-glance-api.service \

openstack-glance-registry.service

systemctl start openstack-glance-api.service \

openstack-glance-registry.service

# verify the ports

netstat -lntup|grep -E '9191|9292'

8: Upload an image from the command line

wget http://download.cirros-cloud.net/0.3.5/cirros-0.3.5-x86_64-disk.img

openstack image create "cirros" --file cirros-0.3.5-x86_64-disk.img --disk-format qcow2 --container-format bare --public

## verify

ll /var/lib/glance/images/

# or

openstack image list

7.4 Install the nova service

7.4.1 Install nova on the controller node

1: Create the databases and grant privileges

CREATE DATABASE nova_api;

CREATE DATABASE nova;

CREATE DATABASE nova_cell0;

GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'localhost' \

IDENTIFIED BY '123456';

GRANT ALL PRIVILEGES ON nova_api.* TO 'nova'@'%' \

IDENTIFIED BY '123456';

GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'localhost' \

IDENTIFIED BY '123456';

GRANT ALL PRIVILEGES ON nova.* TO 'nova'@'%' \

IDENTIFIED BY '123456';

GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'localhost' \

IDENTIFIED BY '123456';

GRANT ALL PRIVILEGES ON nova_cell0.* TO 'nova'@'%' \

IDENTIFIED BY '123456';

2: Create the users in keystone and assign the role

openstack user create --domain default --password 123456 nova

openstack role add --project service --user nova admin

# placement tracks the resource usage of cloud hosts

openstack user create --domain default --password 123456 placement

openstack role add --project service --user placement admin

3: Create the services in keystone and register the HTTP (API) endpoints

openstack service create --name nova --description "OpenStack Compute" compute

openstack endpoint create --region RegionOne compute public http://controller:8774/v2.1

openstack endpoint create --region RegionOne compute internal http://controller:8774/v2.1

openstack endpoint create --region RegionOne compute admin http://controller:8774/v2.1

openstack service create --name placement --description "Placement API" placement

openstack endpoint create --region RegionOne placement public http://controller:8778

openstack endpoint create --region RegionOne placement internal http://controller:8778

openstack endpoint create --region RegionOne placement admin http://controller:8778

4: Install the service packages

yum install openstack-nova-api openstack-nova-conductor \

openstack-nova-console openstack-nova-novncproxy \

openstack-nova-scheduler openstack-nova-placement-api -y

5: Edit the configuration files (database connection, keystone authorization)

# edit the nova configuration file

vim /etc/nova/nova.conf

[DEFAULT]

## enable the nova compute API and the metadata API

enabled_apis = osapi_compute,metadata

## connect to the rabbitmq message queue

transport_url = rabbit://openstack:123456@controller

my_ip = 10.0.0.11

# enable the neutron network service and disable nova's built-in firewall

use_neutron = True

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[api]

auth_strategy = keystone

[api_database]

connection = mysql+pymysql://nova:123456@controller/nova_api

[barbican]

[cache]

[cells]

[cinder]

[cloudpipe]

[conductor]

[console]

[consoleauth]

[cors]

[cors.subdomain]

[crypto]

[database]

connection = mysql+pymysql://nova:123456@controller/nova

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://controller:9292

[guestfs]

[healthcheck]

[hyperv]

[image_file_url]

[ironic]

[key_manager]

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = nova

password = 123456

[libvirt]

[matchmaker_redis]

[metrics]

[mks]

[neutron]

[notifications]

[osapi_v21]

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[pci]

# tracks the resource usage of VMs

[placement]

os_region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:35357/v3

username = placement

password = 123456

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[ssl]

[trusted_computing]

[upgrade_levels]

[vendordata_dynamic_auth]

[vmware]

# VNC connection settings

[vnc]

enabled = true

vncserver_listen = $my_ip

vncserver_proxyclient_address = $my_ip

[workarounds]

[wsgi]

[xenserver]

[xvp]

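# Edit the httpd configuration file

vi /etc/httpd/conf.d/00-nova-placement-api.conf

Add the following above line 16:

<Directory /usr/bin>
   <IfVersion >= 2.4>
      Require all granted
   </IfVersion>
   <IfVersion < 2.4>
      Order allow,deny
      Allow from all
   </IfVersion>
</Directory>

# Restart httpd

systemctl restart httpd

6: Sync the database (create tables)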

su -s /bin/sh -c "nova-manage api_db sync" nova

su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova

su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova

su -s /bin/sh -c "nova-manage db sync" nova
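The four commands above are meant to run in the order shown, and there is little point continuing if one of them fails. A minimal sketch of a wrapper that stops at the first failure follows. `run_in_order` is a hypothetical helper name, and the commented example simply repeats the commands from these notes.

```shell
# run_in_order CMD...
# Run each command string in turn and stop at the first failure.
# Hypothetical helper for illustration.
run_in_order() {
    for step in "$@"; do
        echo "==> $step"
        if ! sh -c "$step"; then
            echo "FAILED: $step" >&2
            return 1
        fi
    done
}

# Example (run as root on the controller node):
# run_in_order \
#   'su -s /bin/sh -c "nova-manage api_db sync" nova' \
#   'su -s /bin/sh -c "nova-manage cell_v2 map_cell0" nova' \
#   'su -s /bin/sh -c "nova-manage cell_v2 create_cell --name=cell1 --verbose" nova' \
#   'su -s /bin/sh -c "nova-manage db sync" nova'
```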

# Check

nova-manage cell_v2 list_cells

7: Start the services

systemctl enable openstack-nova-api.service \
  openstack-nova-consoleauth.service openstack-nova-scheduler.service \
  openstack-nova-conductor.service openstack-nova-novncproxy.service

systemctl start openstack-nova-api.service \
  openstack-nova-consoleauth.service openstack-nova-scheduler.service \
  openstack-nova-conductor.service openstack-nova-novncproxy.service

# Check

openstack compute service list

7.4.2 Install the nova service on the compute node

1: Install

yum install openstack-nova-compute -y

2: Configure

# Edit the configuration file /etc/nova/nova.conf

vim /etc/nova/nova.conf

[DEFAULT]

enabled_apis = osapi_compute,metadata

transport_url = rabbit://openstack:123456@controller

my_ip = 10.0.0.31

use_neutron = True

firewall_driver = nova.virt.firewall.NoopFirewallDriver

[api]

auth_strategy = keystone

[api_database]

[barbican]

[cache]

[cells]

[cinder]

[cloudpipe]

[conductor]

[console]

[consoleauth]

[cors]

[cors.subdomain]

[crypto]

[database]

[ephemeral_storage_encryption]

[filter_scheduler]

[glance]

api_servers = http://controller:9292

[guestfs]

[healthcheck]

[hyperv]

[image_file_url]

[ironic]

[key_manager]

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = nova

password = 123456

[libvirt]

[matchmaker_redis]

[metrics]

[mks]

[neutron]

[notifications]

[osapi_v21]

[oslo_concurrency]

lock_path = /var/lib/nova/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[pci]

[placement]

os_region_name = RegionOne

project_domain_name = Default

project_name = service

auth_type = password

user_domain_name = Default

auth_url = http://controller:35357/v3

username = placement

password = 123456

[quota]

[rdp]

[remote_debug]

[scheduler]

[serial_console]

[service_user]

[spice]

[ssl]

[trusted_computing]

[upgrade_levels]

[vendordata_dynamic_auth]

[vmware]

[vnc]

enabled = True

vncserver_listen = 0.0.0.0

vncserver_proxyclient_address = $my_ip

novncproxy_base_url = http://controller:6080/vnc_auto.html

[workarounds]

[wsgi]

[xenserver]

[xvp]

3: Start

systemctl start libvirtd openstack-nova-compute.service

systemctl enable libvirtd openstack-nova-compute.service
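The compute node in these notes is itself a VMware guest, so KVM acceleration depends on the CPU virtualization flags being passed through. The sketch below counts the `vmx`/`svm` flags in `/proc/cpuinfo`. A count of zero usually means `virt_type = qemu` should be set under `[libvirt]` in nova.conf. The function names are hypothetical and the optional file argument exists only to make the check easy to try out.

```shell
# count_virt_flags [CPUINFO_FILE]
# Count the lines that advertise Intel VT-x (vmx) or AMD-V (svm).
count_virt_flags() {
    grep -E -c '(vmx|svm)' "${1:-/proc/cpuinfo}"
}

# suggest_virt_type [CPUINFO_FILE]
# Print "kvm" when hardware acceleration is visible, otherwise "qemu".
suggest_virt_type() {
    if [ "$(count_virt_flags "$1")" -eq 0 ]; then
        echo "qemu"
    else
        echo "kvm"
    fi
}
```

Running `suggest_virt_type` on the compute node prints the value to consider for `virt_type`.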

4: Verify on the controller node

openstack compute service list
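Reading the table by eye works, but the check can also be scripted. The sketch below reads `binary host state` lines from standard input and reports every service whose state is not `up`. `report_down_services` is a hypothetical helper, and it assumes the column names `Binary`, `Host` and `State` in the client's output.

```shell
# report_down_services
# Read "binary host state" lines on stdin and print every service
# whose state is not "up"; exit status 1 if any were found.
report_down_services() {
    awk 'NF >= 3 && $3 != "up" { print $1 " on " $2 " is " $3; bad = 1 }
         END { exit bad }'
}

# Example (on the controller node, with admin credentials loaded):
# openstack compute service list -f value -c Binary -c Host -c State \
#   | report_down_services
```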

5: On the controller node, discover the compute node:

su -s /bin/sh -c "nova-manage cell_v2 discover_hosts --verbose" nova

7.5 Install the neutron service

7.5.1 Install the neutron service on the controller node

1: Create the database and grant privileges

CREATE DATABASE neutron;

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'localhost' IDENTIFIED BY 'NEUTRON_DBPASS';

GRANT ALL PRIVILEGES ON neutron.* TO 'neutron'@'%' IDENTIFIED BY 'NEUTRON_DBPASS';

2: Create the user on keystone and assign its role

openstack user create --domain default --password NEUTRON_PASS neutron

openstack role add --project service --user neutron admin

3: Create the service on keystone and register its HTTP access address (API endpoint)

openstack service create --name neutron \
  --description "OpenStack Networking" network

openstack endpoint create --region RegionOne \
  network public http://controller:9696

openstack endpoint create --region RegionOne \
  network internal http://controller:9696

openstack endpoint create --region RegionOne \
  network admin http://controller:9696

4: Install the service packages

# Choose networking option 1

yum install openstack-neutron openstack-neutron-ml2 \
  openstack-neutron-linuxbridge ebtables -y

5: Edit the configuration files (database connection, keystone authorization)

# Edit neutron.conf

vim /etc/neutron/neutron.conf

[DEFAULT]

core_plugin = ml2

service_plugins =

transport_url = rabbit://openstack:123456@controller

auth_strategy = keystone

notify_nova_on_port_status_changes = true

notify_nova_on_port_data_changes = true

[agent]

[cors]

[cors.subdomain]

[database]

connection = mysql+pymysql://neutron:NEUTRON_DBPASS@controller/neutron

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = NEUTRON_PASS

[matchmaker_redis]

[nova]

auth_url = http://controller:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = nova

password = 123456

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[qos]

[quotas]

[ssl]

## Edit ml2_conf.ini

vim /etc/neutron/plugins/ml2/ml2_conf.ini

[DEFAULT]

[ml2]

type_drivers = flat,vlan

tenant_network_types =

mechanism_drivers = linuxbridge

extension_drivers = port_security

[ml2_type_flat]

flat_networks = provider

[ml2_type_geneve]

[ml2_type_gre]

[ml2_type_vlan]

[ml2_type_vxlan]

[securitygroup]

enable_ipset = true

## Edit linuxbridge_agent.ini

vim /etc/neutron/plugins/ml2/linuxbridge_agent.ini

[DEFAULT]

[agent]

[linux_bridge]

physical_interface_mappings = provider:eth0

[securitygroup]

enable_security_group = true

firewall_driver = neutron.agent.linux.iptables_firewall.IptablesFirewallDriver

[vxlan]

enable_vxlan = false
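`physical_interface_mappings = provider:eth0` only works if the host really has an interface called `eth0`. On CentOS 7 the name may be something like `ens33` unless it was renamed. A small sketch for checking this before starting the agent is shown below. Both function names are hypothetical.

```shell
# mapping_iface MAPPING
# Print the interface part of a "network:interface" mapping.
mapping_iface() {
    echo "${1#*:}"
}

# iface_exists NAME
# Succeed when the kernel knows a network interface called NAME.
iface_exists() {
    [ -e "/sys/class/net/$1" ]
}

# Example:
# iface_exists "$(mapping_iface provider:eth0)" || echo "eth0 not found" >&2
```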

## Edit dhcp_agent.ini

vim /etc/neutron/dhcp_agent.ini

[DEFAULT]

interface_driver = linuxbridge

dhcp_driver = neutron.agent.linux.dhcp.Dnsmasq

enable_isolated_metadata = true

[agent]

[ovs]

## Edit metadata_agent.ini

vim /etc/neutron/metadata_agent.ini

[DEFAULT]

nova_metadata_ip = controller

metadata_proxy_shared_secret = METADATA_SECRET

[agent]

[cache]
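`METADATA_SECRET` is a placeholder. The same value has to appear here and in the `[neutron]` section of nova.conf on the controller node. If a random value is preferred over a hand-typed one, a sketch like the following can generate it. `gen_secret` is a hypothetical helper and relies on `/dev/urandom`, `head -c` and `od` being available.

```shell
# gen_secret [BYTES]
# Print BYTES random bytes as lowercase hex (default: 16 bytes, 32 characters).
gen_secret() {
    head -c "${1:-16}" /dev/urandom | od -An -tx1 | tr -d ' \n'
    echo
}
```

Generate the value once and paste the same string into both files.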

#### Edit the nova configuration file on the controller node

vim /etc/nova/nova.conf

[neutron]

url = http://controller:9696

auth_url = http://controller:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = NEUTRON_PASS

service_metadata_proxy = true

metadata_proxy_shared_secret = METADATA_SECRET

# Verify the controller node's nova configuration file again

md5sum /etc/nova/nova.conf

2c5e119c2b8a2f810bf5e0e48c099047 /etc/nova/nova.conf
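The checksum above matches only if the file is byte-for-byte identical to the one in these notes, including passwords, comments and whitespace. Under that assumption, the comparison can be scripted as in the sketch below. `verify_md5` is a hypothetical helper.

```shell
# verify_md5 FILE EXPECTED
# Compare the MD5 checksum of FILE with EXPECTED.
verify_md5() {
    actual=$(md5sum "$1" | awk '{ print $1 }')
    if [ "$actual" = "$2" ]; then
        echo "OK: $1"
    else
        echo "MISMATCH: $1 (got $actual)" >&2
        return 1
    fi
}

# Example:
# verify_md5 /etc/nova/nova.conf 2c5e119c2b8a2f810bf5e0e48c099047
```

A mismatch does not necessarily mean the configuration is wrong. It only means the file differs somewhere from the reference copy.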

6: Sync the database (create tables)

ln -s /etc/neutron/plugins/ml2/ml2_conf.ini /etc/neutron/plugin.ini

su -s /bin/sh -c "neutron-db-manage --config-file /etc/neutron/neutron.conf \
  --config-file /etc/neutron/plugins/ml2/ml2_conf.ini upgrade head" neutron

7: Start the services

systemctl restart openstack-nova-api.service

systemctl enable neutron-server.service \
  neutron-linuxbridge-agent.service neutron-dhcp-agent.service \
  neutron-metadata-agent.service

systemctl restart neutron-server.service \
  neutron-linuxbridge-agent.service neutron-dhcp-agent.service \
  neutron-metadata-agent.service

# Verify

openstack network agent list

7.5.2 Install the neutron service on the compute node

1: Install

yum install openstack-neutron-linuxbridge ebtables ipset

2: Configure

# Edit neutron.conf

vim /etc/neutron/neutron.conf

[DEFAULT]

transport_url = rabbit://openstack:123456@controller

auth_strategy = keystone

[agent]

[cors]

[cors.subdomain]

[database]

[keystone_authtoken]

auth_uri = http://controller:5000

auth_url = http://controller:35357

memcached_servers = controller:11211

auth_type = password

project_domain_name = default

user_domain_name = default

project_name = service

username = neutron

password = NEUTRON_PASS

[matchmaker_redis]

[nova]

[oslo_concurrency]

lock_path = /var/lib/neutron/tmp

[oslo_messaging_amqp]

[oslo_messaging_kafka]

[oslo_messaging_notifications]

[oslo_messaging_rabbit]

[oslo_messaging_zmq]

[oslo_middleware]

[oslo_policy]

[qos]

[quotas]

[ssl]

## linuxbridge_agent configuration file (copy it from the controller node)

scp -rp 10.0.0.11:/etc/neutron/plugins/ml2/linuxbridge_agent.ini /etc/neutron/plugins/ml2/linuxbridge_agent.ini

## On the compute node, edit nova.conf again

vim /etc/nova/nova.conf

[neutron]

url = http://controller:9696

auth_url = http://controller:35357

auth_type = password

project_domain_name = default

user_domain_name = default

region_name = RegionOne

project_name = service

username = neutron

password = NEUTRON_PASS

# Verify the checksum

md5sum /etc/nova/nova.conf

91cc8aa0f7e33d7b824301cc894e90f1 /etc/nova/nova.conf

3: Start

systemctl restart openstack-nova-compute.service

systemctl enable neutron-linuxbridge-agent.service

systemctl start neutron-linuxbridge-agent.service

7.6 Install the dashboard service

# Install the dashboard on the compute node

1: Install

yum install openstack-dashboard -y

2: Configure

# Upload the prepared local_settings file with rz, then overwrite the packaged copy

rz local_settings

cat local_settings >/etc/openstack-dashboard/local_settings

3: Start

systemctl start httpd

4: Access the dashboard

# Create the network

neutron net-create --shared --provider:physical_network provider --provider:network_type flat WAN

neutron subnet-create --name subnet-wan --allocation-pool \
  start=10.0.0.100,end=10.0.0.200 --dns-nameserver 223.5.5.5 \
  --gateway 10.0.0.254 WAN 10.0.0.0/24
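The allocation pool and the gateway both have to fall inside the subnet's CIDR. The sketch below does that arithmetic in the shell. `ip2int` and `in_cidr` are hypothetical helper names, and the code assumes well-formed IPv4 input and a shell with 64-bit arithmetic, such as bash.

```shell
# ip2int A.B.C.D
# Print the IPv4 address as a 32-bit integer.
ip2int() {
    local IFS=.
    set -- $1
    echo $(( ($1 << 24) + ($2 << 16) + ($3 << 8) + $4 ))
}

# in_cidr IP NETWORK/PREFIX
# Succeed when IP lies inside the given CIDR block.
in_cidr() {
    local ip net bits mask
    ip=$(ip2int "$1")
    net=$(ip2int "${2%/*}")
    bits=${2#*/}
    # Build the netmask, e.g. /24 -> 0xFFFFFF00.
    mask=$(( (0xFFFFFFFF << (32 - bits)) & 0xFFFFFFFF ))
    [ $(( ip & mask )) -eq $(( net & mask )) ]
}

# Example: check the pool and gateway used in these notes.
# for ip in 10.0.0.100 10.0.0.200 10.0.0.254; do
#     in_cidr "$ip" 10.0.0.0/24 || echo "$ip is outside 10.0.0.0/24" >&2
# done
```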

# Create a flavor (hardware configuration profile)

openstack flavor create --id 0 --vcpus 1 --ram 64 --disk 1 m1.nano

# Upload a key pair

ssh-keygen -q -N "" -f ~/.ssh/id_rsa

openstack keypair create --public-key ~/.ssh/id_rsa.pub mykey

# Allow ping (ICMP) and SSH in the default security group

openstack security group rule create --proto icmp default

openstack security group rule create --proto tcp --dst-port 22 default

- ### 7.8 Install the cinder block storage service (cloud disk service)